The Definitive Guide to AI Governance for Australian Enterprises

Most organisations discover their AI governance gaps when something goes wrong and nobody can establish accountability. This guide maps the seven domains of enterprise AI governance and explains why building governance into procurement from the start is the only approach that reliably works.

Most organisations do not discover their AI governance gaps by anticipating them. They discover them when a model update changes something the organisation relied on, when a privacy question cannot be answered because no audit trail exists, or when an AI-generated output causes a problem and nobody can establish accountability.

By that point, governance is crisis management.

This guide is written for IT, risk, legal, and procurement leaders in Australian organisations who are deploying enterprise AI and need to understand what governance actually requires: not as a compliance exercise, but as an operational discipline that determines whether AI delivers sustained value or accumulates unchecked risk.

Enterprise AI governance is the structured system of controls, accountability, monitoring, and oversight that governs how AI systems are acquired, deployed, and managed within an organisation over time. It spans vendor selection, data handling, access controls, output accountability, model lifecycle management, regulatory compliance, and ongoing operational oversight.

AI governance is not a single control. It is a set of interlocking policies, processes, and accountability structures, and its scope extends from vendor selection through to daily operational oversight. Organisations that treat governance as a post-deployment concern typically find that retrofitting controls into an embedded system costs substantially more than building governance into procurement and deployment from the start.

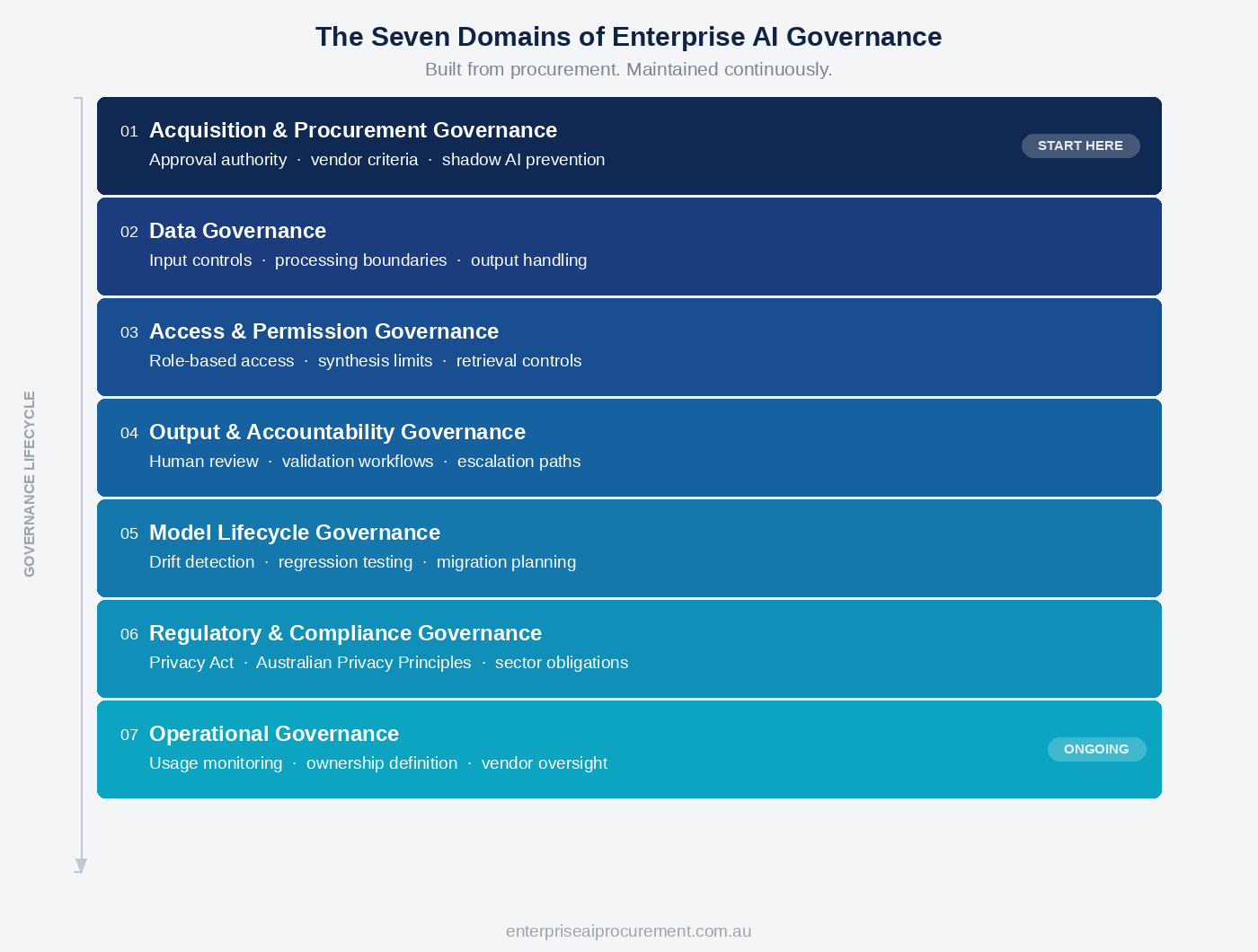

Enterprise AI governance operates across seven domains: acquisition and procurement, data, access and permissions, output and accountability, model lifecycle, regulatory compliance, and operational oversight. Each addresses a distinct category of risk. Together, they form the governance infrastructure that enterprise AI requires.

Why AI Governance Differs From Existing IT Governance

Traditional IT governance was designed for deterministic systems. Software either executes the defined logic or it does not. Outputs can be tested, versioned, and audited against a specification. Behaviour is predictable given the same inputs.

Enterprise AI does not operate this way.

Large language models and AI-enabled platforms produce probabilistic outputs. The same input can generate different outputs depending on model state, context, and configuration. Many vendors update models without requiring explicit customer approval, and those updates can alter output behaviour in ways that affect existing workflows, validations, and decisions.

This breaks three foundational assumptions of traditional IT governance.

The first assumption is that system behaviour is stable between approved changes. AI model updates violate this. A vendor can update the underlying model that generates outputs without issuing a software version number or requiring a change approval process. The system changes. The change management process built around software governance does not detect it.

The second assumption is that accountability is clear. In traditional software, if an error occurs, it is possible to trace whether the software behaved as specified. With AI, outputs are generated probabilistically, liability for AI-generated content is distributed between vendor, organisation, and user, and the error may not be traceable to a single cause. Existing incident management frameworks often lack the mechanisms to investigate AI failures effectively.

The third assumption is that compliance can be validated at procurement. Security certifications and service level agreements establish that a system met requirements at a point in time. AI systems change continuously. A governance framework must assess ongoing compliance, not just initial conformance.

Enterprise AI governance must account for these differences. Applying existing IT governance frameworks without modification typically leaves material risks unaddressed.

The Seven Domains of Enterprise AI Governance

1. Acquisition and Procurement Governance

AI governance begins before deployment. Organisations that establish governance requirements as procurement criteria, rather than as post-deployment additions, avoid the structural problem of embedding ungovernable systems.

Acquisition governance defines which AI systems are permitted within the organisation, who has authority to approve AI procurement, and what baseline requirements all AI vendors must meet before selection. This typically includes data handling standards, security certifications, audit capability, and contractual commitments around training data exclusion.

Without acquisition governance, AI tools proliferate without oversight. Individual teams adopt consumer or departmental AI tools that do not meet enterprise standards. Shadow AI, meaning the use of unapproved AI tools within an organisation, is not primarily a technology problem. It is an acquisition governance failure.

Procurement criteria that include governance capability as a gating requirement change vendor selection outcomes. Vendors that cannot demonstrate audit logging, data residency controls, or administrative governance capability are removed before functional evaluation begins. This is not a conservative approach. It is the disciplined sequencing that the enterprise AI procurement framework requires.
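
The gating sequence described above can be sketched in a few lines of Python. This is a minimal illustration, not a procurement tool: the capability names are taken from the examples in the preceding paragraph, and the `Vendor` structure, vendor names, and `apply_governance_gate` function are hypothetical.

```python
from dataclasses import dataclass, field

# Assumed gating capabilities, based on the examples named in the text.
GATING_CAPABILITIES = frozenset(
    {"audit_logging", "data_residency_controls", "admin_governance"}
)


@dataclass
class Vendor:
    name: str
    capabilities: set = field(default_factory=set)


def apply_governance_gate(vendors):
    """Split vendors into a shortlist and a record of who was removed and why."""
    shortlisted = []
    removed = {}
    for vendor in vendors:
        missing = GATING_CAPABILITIES - vendor.capabilities
        if missing:
            # Removed before any functional evaluation takes place.
            removed[vendor.name] = sorted(missing)
        else:
            shortlisted.append(vendor.name)
    return shortlisted, removed


# Hypothetical vendors for illustration.
vendors = [
    Vendor("Vendor A", {"audit_logging", "data_residency_controls", "admin_governance"}),
    Vendor("Vendor B", {"audit_logging"}),
]
shortlisted, removed = apply_governance_gate(vendors)
```

Recording the missing capabilities for each removed vendor, rather than only dropping them, keeps the removal decision auditable.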

2. Data Governance for AI

AI systems process, generate, and sometimes retain data in ways that create distinct governance obligations.

The data governance concerns specific to AI fall into three categories.

Input data governance addresses what data can be submitted to AI systems. Employees using conversational AI interfaces may introduce customer personal information, commercially sensitive content, or confidential third-party data through prompts, attachments, and workflow integrations. Organisations must define what data may and may not be submitted to AI systems, and ensure that guidance is operationally practical. Prohibitions that cannot be enforced create the appearance of governance without the substance.

Processing and storage governance addresses where data goes once it enters an AI system. Enterprise AI vendors typically offer contractual commitments about data retention and training exclusion. Procurement should require these commitments explicitly and verify that they extend to subprocessors, meaning third parties who may process data on behalf of the primary vendor. Data residency requirements, which are particularly relevant for Australian organisations with obligations under the Australian Privacy Principles, must be confirmed at the infrastructure level, not just the contractual level.

Output data governance addresses AI-generated content. Outputs from AI systems may reproduce information the organisation did not intend to disclose, may contain inaccuracies presented with apparent authority, or may create intellectual property questions. Organisations need policies that govern how AI-generated outputs are labelled, validated, and used.
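
One way to give an input data rule some operational substance is a screening step that runs before a prompt leaves the organisation. The sketch below is illustrative only: the two patterns (email addresses and Australian mobile numbers) and the `screen_prompt` function are assumptions, and simple pattern matching will miss most forms of personal or confidential information.

```python
import re

# Illustrative patterns only. Real deployments need far broader detection.
PATTERNS = {
    "email_address": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "au_mobile_number": re.compile(r"\b04\d{2}\s?\d{3}\s?\d{3}\b"),
}


def screen_prompt(prompt: str) -> list:
    """Return the names of the patterns found in the prompt, sorted by name."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(prompt))


findings = screen_prompt("Please draft a reply to jane@example.com, mobile 0412 345 678.")
clean = screen_prompt("Summarise our leave policy in plain English.")
```

A non-empty result could block the submission or prompt the user to confirm, depending on how the organisation's policy is written.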

| Domain | Primary Risk Addressed |
|---|---|
| Acquisition and Procurement | Shadow AI proliferation; embedding systems that cannot be governed |
| Data | Input exposure; subprocessor liability; output misuse |
| Access and Permissions | Unauthorised data synthesis; cross-boundary disclosure |
| Output and Accountability | Unreviewed high-stakes outputs; unresolved liability |
| Model Lifecycle | Undetected behaviour drift; unmanaged model retirement |
| Regulatory and Compliance | Regulatory misalignment; evolving obligation gaps |
| Operational | Ownership ambiguity; unmonitored performance degradation |

3. Access and Permission Governance

Access governance for AI is more complex than for traditional software because AI systems can synthesise and surface information across boundaries that were not explicitly modelled.

A conventional access control framework grants a user access to specific data sources based on their role. An AI system integrated with multiple data sources may synthesise information in ways that reveal insights a user would not have obtained by accessing each source individually. The AI behaves within permission boundaries at the data source level but may create information disclosure risk at the synthesis level.

Effective access governance requires that the AI's retrieval behaviour is bounded by the same permission model that governs direct access. Where knowledge graph structures, retrieval indexing, or agentic capabilities are in use, permission propagation must be verified as part of deployment, not assumed.
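
The requirement that retrieval respects the direct-access permission model can be illustrated with a simple filter applied before any content reaches the model. This is a sketch under stated assumptions: the `Document` structure, source names, and `retrieve_for_user` function are hypothetical, and real platforms enforce permissions inside their own retrieval layer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    source: str
    text: str


def retrieve_for_user(candidates, user_sources):
    """Keep only documents from sources the user could open directly."""
    return [doc for doc in candidates if doc.source in user_sources]


# Hypothetical retrieval candidates from two data sources.
candidates = [
    Document("d1", "hr_policies", "Leave policy summary"),
    Document("d2", "payroll", "Salary band table"),
]

# This user has direct access to HR policies only.
allowed = retrieve_for_user(candidates, user_sources={"hr_policies"})
allowed_ids = [doc.doc_id for doc in allowed]
```

A deployment test can run the same check in reverse: issue queries as a restricted user and confirm that nothing from an unpermitted source appears in the retrieved set.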

Access governance also applies to external-facing AI. Organisations that deploy AI in customer or partner-facing contexts carry obligations that extend beyond internal workforce governance. The AI must not expose data it is not authorised to disclose. Testing this boundary under realistic conditions before deployment is a governance requirement.

When non-functional access and permission requirements are not defined before vendor evaluation begins, organisations discover gaps after selection, at a point when the cost of addressing them is higher. The work of defining those requirements is a core part of what organisations must resolve before vendor engagement.

4. Output and Accountability Governance

AI outputs require human accountability. This is both a practical and a regulatory expectation.

Enterprise AI contracts typically limit vendor liability for AI-generated outputs. Vendors provide the platform. Organisations decide what to do with what the platform generates. In practice, this means accountability for outputs typically rests with the deploying organisation.

Output governance establishes the controls that make this accountability manageable. This means defining which output types require human review before being acted upon, what validation steps apply to high-stakes outputs, how escalation works when output quality is uncertain, and who has authority to approve AI-generated content for use in specific contexts.

The practical risk of weak output governance is not that AI will produce obviously wrong answers. It is that AI will produce plausible answers that are subtly wrong, and that the workflow does not include a step where a qualified person would detect the error before it matters.

Supervisory controls are the mechanism. They do not require reviewing every AI output. They require designing workflows so that high-stakes outputs are reviewed by someone with the knowledge to assess them, and that the AI is not positioned as the final authority in decisions where errors carry material consequences.
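
A supervisory control of this kind amounts to a routing rule in the workflow. The sketch below assumes a hypothetical set of high-stakes output types and a hypothetical `uncertain` flag raised upstream. It shows the shape of the rule, not a recommended classification.

```python
from enum import Enum


class Route(Enum):
    AUTO_RELEASE = "auto_release"
    HUMAN_REVIEW = "human_review"


# Assumed examples of output types where errors carry material consequences.
HIGH_STAKES_TYPES = {"compliance_review", "financial_analysis", "customer_advice"}


def route_output(output_type: str, uncertain: bool = False) -> Route:
    """Send high-stakes or uncertain outputs to a qualified reviewer."""
    if output_type in HIGH_STAKES_TYPES or uncertain:
        return Route.HUMAN_REVIEW
    return Route.AUTO_RELEASE


meeting_notes = route_output("meeting_summary")
compliance = route_output("compliance_review")
flagged_notes = route_output("meeting_summary", uncertain=True)
```

The rule does not review every output. It only guarantees that the listed categories never reach use without a human step.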

5. Model Lifecycle Governance

Enterprise AI systems do not stabilise after deployment. Vendors update models. Outputs shift. Capabilities change. Features are added or deprecated. Organisations that do not monitor these changes discover their consequences rather than managing them.

Model lifecycle governance requires organisations to establish processes for detecting model changes, assessing their impact, and managing transitions when output behaviour shifts materially.

The core challenge is that model updates are rarely disclosed with the specificity needed to assess impact. A vendor may announce that a new model version has been deployed without specifying how output behaviour has changed for the particular use cases an organisation depends on. Impact assessment requires the organisation to test its own workflows against the updated model, a step that traditional change management processes were not designed to accommodate.

Regression testing for AI workflows is an emerging practice. It requires defining what acceptable output looks like for a representative set of test inputs, then re-running those tests after model updates to detect degradation. This is not feasible for every use case, but it is appropriate for workflows where output consistency is critical: compliance reviews, financial analysis, or any context where errors carry material consequences.
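
A minimal sketch of this practice is shown below. Each test case pairs an input with a check that defines what acceptable output looks like for that input, and the same cases are re-run after a model update. The case names, prompts, and the two stand-in model functions are hypothetical; in practice `model` would wrap a call to the deployed AI platform.

```python
from typing import Callable, List, Tuple

# (case name, test input, acceptance check on the output)
TestCase = Tuple[str, str, Callable[[str], bool]]


def run_regression(model: Callable[[str], str], cases: List[TestCase]) -> List[str]:
    """Return the names of cases whose output fails its acceptance check."""
    failures = []
    for name, prompt, is_acceptable in cases:
        output = model(prompt)
        if not is_acceptable(output):
            failures.append(name)
    return failures


cases = [
    ("mentions_notice_period", "Summarise clause 4.", lambda out: "30 days" in out),
    ("stays_short", "Summarise clause 4.", lambda out: len(out.split()) <= 50),
]


# Stand-ins for the vendor model before and after an update (hypothetical).
def model_before(prompt: str) -> str:
    return "Either party may terminate with 30 days notice."


def model_after(prompt: str) -> str:
    return "Either party may terminate with reasonable notice."


failures_before = run_regression(model_before, cases)
failures_after = run_regression(model_after, cases)
```

Because outputs are probabilistic, acceptance checks generally need to test properties of the output (required facts, length, format) rather than exact string equality.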

Lifecycle governance also addresses model retirement and migration. Vendors periodically retire older models in favour of newer ones. Organisations that have built workflows around specific model behaviour must plan for migration before it is forced. When migration is reactive rather than planned, the disruption cost, including retesting, reconfiguration, and retraining, is typically higher than it would have been under a managed transition.

Agentic AI deployments, where AI systems take sequential actions with minimal human intervention between steps, require particularly rigorous lifecycle governance. Model updates that alter reasoning behaviour or tool use in agentic workflows can produce material operational consequences with limited visibility. Governance frameworks for agentic AI are addressed in a dedicated cluster article in this series.

6. Regulatory and Compliance Governance

Enterprise AI in Australia operates within a regulatory environment that is still developing. Several existing frameworks already apply directly, and AI-specific regulation is emerging.

The Privacy Act 1988 and the Australian Privacy Principles (APPs) establish the baseline framework for how personal information must be handled by private sector organisations covered by the Act, generally those with annual turnover above $3 million, along with some smaller organisations such as health service providers. Enterprise AI systems that process personal information, including through prompt inputs, document processing, or automated decision support, must operate within this framework. The organisation, not the vendor, is the entity accountable under the APPs for how personal information is handled by the systems it deploys.

The Australian Government's Voluntary AI Safety Standard, published by the Department of Industry, Science and Resources, provides guidance across ten guardrails for responsible AI deployment. While voluntary at the time of publication, these guardrails are useful as a governance checklist and are widely referenced in public policy discussions, with many expecting them to influence future regulatory approaches. Organisations that implement governance aligned with the standard are better positioned for regulatory evolution that is anticipated over the next several years.

Australia's AI Ethics Principles, a voluntary set of eight principles published by the federal government in 2019, have become a reference standard for organisations building AI governance frameworks, in the public and private sectors alike. They address accountability, transparency, fairness, privacy, reliability, and contestability, among other themes. They are not binding on private sector organisations, but they represent the ethical benchmark that regulators, auditors, and boards are increasingly applying.

Sector-specific obligations are the most immediate regulatory constraint for many Australian organisations. Financial services organisations subject to APRA oversight must address AI-related risk within operational risk frameworks. Healthcare providers processing sensitive health information carry obligations under privacy legislation that extend directly to AI-enabled systems. Legal, insurance, and professional services organisations each operate under regulatory contexts that shape what AI governance must address in their specific environments.

Compliance governance for enterprise AI is not a one-time exercise. Regulatory requirements are evolving. Vendor terms change. Organisational AI use typically expands after initial deployment in ways that may trigger additional obligations. A governance framework must include a mechanism for reviewing compliance against current requirements, not just requirements at the time of procurement.

7. Operational Governance After Go-Live

The organisational tendency after deployment is to declare success and move on. Governance responsibility transfers to a team. A budget is allocated. The system runs.

What is missing is the ongoing oversight structure that enterprise AI requires.

Operational governance defines who monitors the AI after go-live, what they are monitoring for, what constitutes a triggering event for review or escalation, and who has authority to take action. It includes usage monitoring, output quality review, licence management, and vendor relationship oversight.
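
Triggering events and authority to act can be written down as an explicit lookup rather than left to convention. The sketch below is illustrative only: the event names, role titles, and response times are assumptions, not recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EscalationRule:
    notify: str                # who is informed
    authority: str             # who can take action
    respond_within_hours: int  # response timeline


# Hypothetical triggering events, roles, and timelines.
ESCALATION_RULES = {
    "output_quality_incident": EscalationRule("AI governance lead", "Business owner", 24),
    "model_update_detected": EscalationRule("Platform owner", "AI governance lead", 72),
    "data_handling_concern": EscalationRule("Privacy officer", "Chief risk officer", 4),
}

# Unrecognised events still reach a named owner instead of being dropped.
DEFAULT_RULE = EscalationRule("AI governance lead", "AI governance lead", 24)


def escalation_for(event_type: str) -> EscalationRule:
    """Look up the escalation rule for an event, falling back to the default."""
    return ESCALATION_RULES.get(event_type, DEFAULT_RULE)


privacy_rule = escalation_for("data_handling_concern")
unknown_rule = escalation_for("licence_overage")
```

The default rule is the design choice that matters most here: an event nobody anticipated should land with a named owner, not fall between teams.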

The organisations that manage this effectively treat operational governance as a defined function with explicit resourcing, not as a shared responsibility that everyone assumes someone else is managing. When operational ownership is unclear, governance gaps accumulate without being identified until they produce a visible problem.

Operational governance and value realisation are connected activities. The same usage monitoring that identifies governance concerns also identifies where the AI is delivering value, where adoption is stalling, and where use case design needs refinement. Governance monitoring and performance monitoring draw from the same data and serve the same operational purpose.

AI Governance in the Australian Context

Australian enterprise AI governance must be calibrated to the Australian regulatory environment. Several features of that environment distinguish it from the frameworks that dominate international governance literature, most of which is produced in a US or EU context.

Australia does not currently have a single comprehensive AI regulation equivalent to the EU AI Act. Governance obligations are distributed across privacy law, sector-specific regulation, and voluntary standards. Organisations cannot assume that international compliance frameworks map directly to Australian requirements.

The Australian Privacy Act and the APPs are the most immediately relevant mandatory framework for most private sector organisations. APP 8 addresses cross-border disclosure of personal information, which is relevant wherever AI processing or data storage occurs offshore. APP 11 addresses the security of personal information, which is relevant to how AI systems are configured and monitored. Organisations should not require a specific AI privacy regulation to apply these principles. They apply to AI systems now, through the general obligations the APPs impose on personal information handling.

The federal government's AI governance agenda is developing at pace. The Voluntary AI Safety Standard's ten guardrails, covering areas including risk identification, human oversight, transparency, and accountability, reflect the direction of regulatory expectation. Organisations that treat these as the floor of their governance practice, rather than as aspirational guidance, are better placed for the mandatory requirements that are expected to follow.

For organisations in regulated industries, sector-specific guidance from APRA, ASIC, and the Australian Digital Health Agency provides the most operationally relevant governance requirements. These are the frameworks under which AI deployments will be scrutinised. Understanding them before deployment, not after, is the governance approach that avoids remediation.

Building a Governance Structure: What Good Looks Like

AI governance structures that work share common features. They are not bureaucratic overlays. They are operational functions with clear ownership, defined scope, and practical authority.

Ownership is explicit. Someone is accountable for AI governance. This is not the person who procured the AI or the person who uses it most. It is the person or team responsible for ensuring the governance framework is implemented, monitored, and updated. In larger organisations, this may be a dedicated AI governance function. In smaller organisations, it may sit within IT, legal, or risk. Accountability must be assigned, not assumed.

Policy is practical. Governance policies that cannot be operationalised create compliance risk rather than reducing it. A policy that prohibits staff from submitting personal information to AI systems is useful only if it is accompanied by guidance on what constitutes personal information in practice, and if the AI systems deployed offer usage controls that support enforcement. Policies written without reference to operational reality are not governance. They are liability documentation.

Monitoring is ongoing. Enterprise AI governance is not a deployment checklist. It requires recurring review of usage patterns, output quality, vendor compliance, regulatory developments, and organisational changes that affect AI risk. The frequency and scope of monitoring should be proportionate to the risk profile of the AI in use.

Escalation is defined. When governance monitoring identifies a problem, whether an output quality incident, a model update that has altered critical workflows, or a data handling concern, the escalation path must be clear. Who is informed? Who has authority to act? What is the response timeline? Governance structures that lack defined escalation processes typically produce slow, inconsistent responses that allow problems to compound.

Governance informs procurement. The governance framework must connect to the procurement process. Requirements that emerge from operational governance experience, including insights about vendor behaviour, contractual gaps, and data handling shortfalls, should feed back into future procurement decisions and contract renewals. Governance that does not influence procurement eventually becomes disconnected from the risk it is meant to manage.

Governance as a Procurement Requirement, Not a Post-Deployment Addition

The structural mistake most organisations make with AI governance is treating it as a post-deployment concern. Governance frameworks are built after deployment because the procurement process did not require governance capability as a vendor selection criterion.

The consequence is that organisations end up with AI systems that are not fully governable: platforms that do not provide adequate audit logging, systems that do not support the access controls needed for compliance, vendors that do not disclose model update schedules in ways that enable lifecycle management, and contracts that do not offer protections adequate for the organisation's regulatory environment.

Retrofitting governance capability into an embedded system is often expensive and in some cases operationally impractical. Requiring governance capability during procurement turns it into a selection criterion: vendors that cannot meet it are removed before functional evaluation begins. This is the same principle that applies to non-functional requirements in a well-structured enterprise AI RFP.

The enterprise AI RFP blueprint addresses how governance requirements translate into RFP structure and evaluation weighting. The total cost of ownership framework addresses the cost implications of governance, including how governance tooling, audit infrastructure, and operational oversight contribute to the true cost of AI deployment. Governance is not separate from commercial and procurement decision-making. It is a dimension of both.

The Governance Cluster: What This Guide Connects To

This guide provides the framework. The cluster articles in this series address each major governance domain in operational depth:

- Enterprise AI governance frameworks for Australian organisations: The Australian-specific framework structure, including how to map governance requirements to the APPs and the Voluntary AI Safety Standard

- AI governance for enterprise model lifecycle management: How to build detection, testing, and migration processes for model updates in production environments

- Agentic AI governance: risk and strategy for enterprise deployments: The specific governance challenges created by autonomous AI agents, including approval workflows, action boundaries, and failure mode management

- The cost of enterprise AI governance: what to budget for: How governance tooling, internal labour, and operational oversight contribute to total cost of ownership

- Enterprise AI governance structure: roles and responsibilities: Who owns AI governance inside the organisation, how accountability is structured across executive, operational, and technical layers, and how to design the operating model before deployment begins

Each cluster article links back to this guide. Pillar pages are updated as cluster articles are published.

The Governance Imperative

Enterprise AI without governance is an unmanaged risk. The outputs exist. The data moves. The models change. The decisions are made. But without the controls, accountability structures, and monitoring mechanisms that governance provides, organisations cannot assess whether the AI is performing within acceptable boundaries, cannot investigate when it does not, and cannot demonstrate to regulators, auditors, or customers that it is being operated responsibly.

Australian enterprises deploying AI in 2026 operate in a regulatory environment that is moving toward greater accountability for AI-related risk. The organisations building governance structures now are not doing compliance theatre. They are building the operational infrastructure that will determine whether their AI investment remains viable as regulatory and commercial expectations increase.

The question is not whether enterprise AI requires governance. It always has. The question is whether the organisation builds that governance into procurement and deployment, or discovers the need for it through problems it could have prevented.

This article provides general commercial and procurement commentary only and does not constitute legal, financial, regulatory, or professional advice. Organisations should obtain independent advice appropriate to their specific circumstances.